Why Our AI Cites Its Sources -- and How We Wired It Through Claude's API

When an AI agent answers a customer's question on your behalf, you have a problem most customer-facing AI tools don't solve: you have no idea where the answer came from.

Did the agent read your business hours from the document you uploaded? Did it pull the price from the product catalog? Did it remember something the customer said two messages ago? Or did it make it up?

For a tool that represents your business to strangers, "I don't know where it got that" is not an acceptable answer. So we wired the citations feature of Claude's API into our agent, and now the portal shows you the source behind every claim the model grounded in your documents.

What Citations Actually Are

Claude's API supports a feature most people building with it haven't turned on yet: if you pass your knowledge sources as structured document blocks with citations enabled, instead of jamming them into the system prompt as text, the model returns citations. Not as a separate post-processing step. Not as a regex match against the response. The citations come back from the model, attached to the specific spans of generated text they support.

When the agent says "We're open Monday through Friday, 9 to 5," the API returns that text annotated with a pointer to the document block that said so. The portal renders that as a clickable badge next to the sentence.

You click the badge. You see the document. You see the line. You see why the AI said what it said.

Why We Did This

The trust layer thesis is that public-facing AI is a different category from copilots and internal tools. The customer can't see the system prompt. The customer doesn't know what documents the agent has access to. The customer is talking to your business, not to an AI lab's research preview.

That puts the entire burden of trust on you, the owner. You're the one who has to know whether the AI is faithfully representing your business or quietly inventing details that will eventually cost you a customer or a lawsuit. The Air Canada chatbot case, in which a Canadian tribunal held the airline to a refund policy its chatbot had invented, is the canonical example. The agent said it. The customer believed it. The company was bound by it.

The defense against that isn't smarter prompts. The defense is making the source of every claim visible to the owner, so the owner can spot the gap between what the AI said and what the documents actually contain.

How It Works

Inside the agent executor, we restructured the system prompt. The instructions and persona stay as text. But the four knowledge sources — the contact profile (what the agent knows about this specific customer), the business hours, the business knowledge base, and the owner's custom instructions — are now wrapped as Claude API document blocks, each with a title and a content body.

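const documents = [
  // Citations are off by default; each document block has to opt in.
  { type: 'document', title: 'Contact profile', source: { ... }, citations: { enabled: true } },
  { type: 'document', title: 'Business hours', source: { ... }, citations: { enabled: true } },
  { type: 'document', title: 'Business knowledge', source: { ... }, citations: { enabled: true } },
  { type: 'document', title: 'Owner instructions', source: { ... }, citations: { enabled: true } },
]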
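To make the wiring concrete, here is a minimal sketch of how the four sources might be assembled into a request body. It rests on a few assumptions: the `source` shape shown (`type: 'text'`, `media_type: 'text/plain'`, `data`) is the API's plain-text document form as we understand it, citations are opted into per document, and the document blocks travel in the user turn's content while instructions and persona stay in `system`. The helper names, the sample texts, and the model placeholder are hypothetical, not our production code.

```typescript
// Plain-text document block with citations switched on.
interface DocumentBlock {
  type: 'document';
  title: string;
  source: { type: 'text'; media_type: 'text/plain'; data: string };
  citations: { enabled: true };
}

interface TextBlock {
  type: 'text';
  text: string;
}

// Hypothetical shape for one knowledge source before it is wrapped.
interface KnowledgeSource {
  title: string;
  text: string;
}

// Hypothetical helper: wrap one knowledge source as a document block.
function toDocumentBlock(src: KnowledgeSource): DocumentBlock {
  return {
    type: 'document',
    title: src.title,
    source: { type: 'text', media_type: 'text/plain', data: src.text },
    citations: { enabled: true },
  };
}

// Hypothetical helper: persona stays in `system`; the documents ride along
// with the customer's message in the user turn.
function buildRequest(persona: string, sources: KnowledgeSource[], customerMessage: string) {
  const question: TextBlock = { type: 'text', text: customerMessage };
  const content: Array<DocumentBlock | TextBlock> = [...sources.map(toDocumentBlock), question];
  return {
    model: 'MODEL_ID_PLACEHOLDER', // placeholder, not a real model name
    max_tokens: 1024,
    system: persona,
    messages: [{ role: 'user' as const, content }],
  };
}

// Made-up sample data for illustration only.
const exampleRequest = buildRequest(
  'You are the texting assistant for a plumbing business.',
  [
    { title: 'Contact profile', text: 'Returning customer. Asked about a leaking tap last week.' },
    { title: 'Business hours', text: 'Open Monday through Friday, 9am to 5pm.' },
    { title: 'Business knowledge', text: 'We service residential plumbing only.' },
    { title: 'Owner instructions', text: 'Never quote prices over text.' },
  ],
  'Are you open on Saturday?',
);
```

The resulting object is what would be sent as the body of a Messages request. The order of the document blocks matters later, because citations refer back to documents by their position in the request.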

When the agent generates a response, Claude returns the text in segments. Some segments are uncited (the agent's own reasoning, conversational filler). Other segments come back tagged with citations pointing at the document blocks they were grounded in.
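The segment handling can be sketched roughly as follows. This assumes each text block in the response carries an optional `citations` array whose entries include `cited_text`, `document_index`, and `document_title` (the fields we rely on; location fields such as character offsets are left out for brevity). `Segment`, `toSegments`, and the sample response are hypothetical names and data for illustration.

```typescript
// Subset of the citation fields this sketch relies on.
interface Citation {
  type: string;            // e.g. 'char_location' for plain-text documents
  cited_text: string;      // excerpt the span was grounded in
  document_index: number;  // position of the document in the request
  document_title: string | null;
}

interface ResponseBlock {
  type: string;
  text?: string;
  citations?: Citation[] | null;
}

// Hypothetical portal-side shape: one piece of text plus its sources.
interface Segment {
  text: string;
  sources: { title: string; excerpt: string }[];
}

// Turn response content blocks into segments. Blocks without citations
// become segments with an empty `sources` list.
function toSegments(content: ResponseBlock[]): Segment[] {
  const segments: Segment[] = [];
  for (const block of content) {
    if (block.type !== 'text' || !block.text) continue;
    const sources = (block.citations ?? []).map((c) => ({
      title: c.document_title ?? `Document ${c.document_index}`,
      excerpt: c.cited_text,
    }));
    segments.push({ text: block.text, sources });
  }
  return segments;
}

// Made-up response content for illustration only.
const sampleContent: ResponseBlock[] = [
  { type: 'text', text: 'Thanks for reaching out! ' },
  {
    type: 'text',
    text: "We're open Monday through Friday, 9 to 5.",
    citations: [
      {
        type: 'char_location',
        cited_text: 'Open Monday through Friday, 9am to 5pm.',
        document_index: 1,
        document_title: 'Business hours',
      },
    ],
  },
];

const parsedSegments = toSegments(sampleContent);
```

In this sample the greeting becomes an uncited segment and the hours sentence becomes a segment with one source, which is the distinction the portal needs to draw.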

The portal chat receives both the segments and the citations. It renders the cited spans with a small inline marker. Click the marker, get a tooltip. The tooltip shows the source document name and the relevant excerpt.
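As a rough illustration of the rendering step, the sketch below turns segments into an HTML string with a bracketed badge after each cited span and the excerpt in a `title` attribute as a stand-in tooltip. The `Segment` shape, the function names, and the `citation-badge` class are hypothetical; a real portal would likely use its own component framework and a richer tooltip.

```typescript
// Hypothetical portal-side shape: one piece of text plus its sources.
interface Segment {
  text: string;
  sources: { title: string; excerpt: string }[];
}

// Escape text before placing it in HTML content or a double-quoted attribute.
function escapeHtml(s: string): string {
  return s
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

// Render one segment: its text, followed by one badge per source.
function renderSegment(segment: Segment): string {
  const badges = segment.sources
    .map(
      (s) =>
        `<span class="citation-badge" title="${escapeHtml(s.excerpt)}">[${escapeHtml(s.title)}]</span>`,
    )
    .join('');
  return escapeHtml(segment.text) + badges;
}

// Render a whole message by concatenating its segments in order.
function renderMessage(segments: Segment[]): string {
  return segments.map(renderSegment).join('');
}

// Made-up segments for illustration only.
const renderedHtml = renderMessage([
  { text: 'Thanks for reaching out! ', sources: [] },
  {
    text: "We're open Monday through Friday, 9 to 5.",
    sources: [{ title: 'Business hours', excerpt: 'Open Monday through Friday, 9am to 5pm.' }],
  },
]);
```

Escaping both the response text and the excerpt matters here, since either one could contain characters that would otherwise be interpreted as markup.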

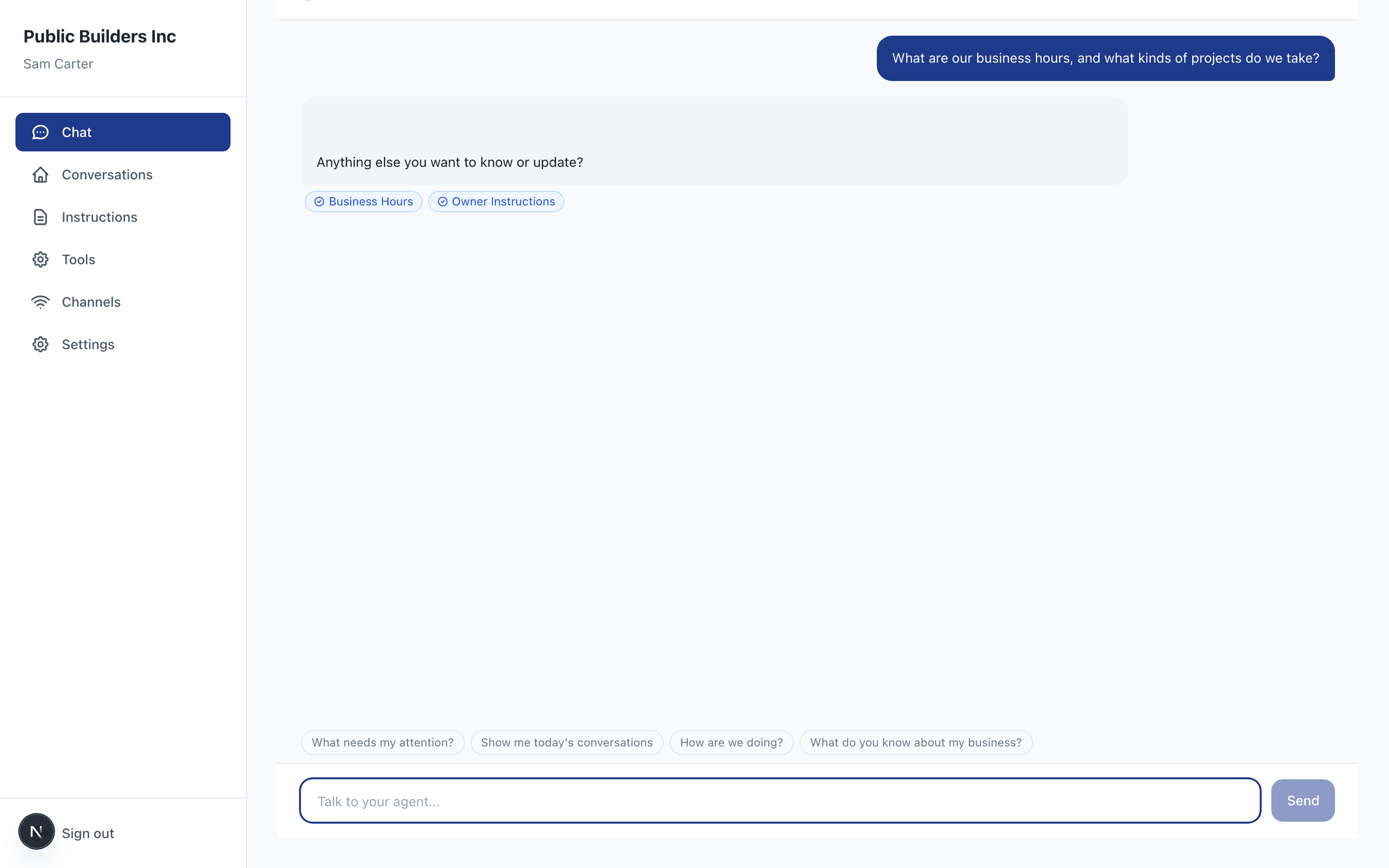

What Owners Actually See

Open the portal, look at a conversation, and the cited claims have small badges beside them: [Business hours], [Contact profile], [Owner instructions]. Hover or tap, and you see exactly which document the AI used.

When something looks wrong, say the AI quoted a price you didn't authorize or claimed a service you don't offer, you look for the badge. Either there is one, and it points to a document that does say that (so now you know your knowledge base is wrong), or there is none (so now you know the AI is generalizing past its sources). Either way, you have a debugging path that doesn't require reading prompts or scrolling through logs.

Why This Matters More Than It Looks

Most AI products treat citations as a feature. We treat them as a trust primitive.

Once the source of every claim is visible, three things become possible that weren't before:

Owners can audit without engineering help. A plumber doesn't need to know what a system prompt is to look at a conversation, see a cited claim, click it, and decide whether the source is accurate. The trust loop runs through the owner, not through us.

The agent's mistakes become legible. When the AI is wrong, it's wrong in a specific way: either the source was wrong, or the agent stretched past the source. Both are fixable. Neither requires guessing.

The knowledge base improves through use. Every cited claim is a vote that the document was useful. Every uncited factual claim is a sign that the AI is reasoning from somewhere we can't see, which usually means the knowledge base has a gap. Over time, the cited/uncited ratio becomes a working measure of how well the documents are doing their job.
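One way such a measure could be computed is sketched below. It is a variation on the ratio described above, not our exact metric: it reports the cited share (cited characters divided by all characters) rather than cited divided by uncited, which avoids dividing by zero when everything is cited. Because conversational filler is also uncited, the number is more useful as a trend over time than as an absolute score. `AuditSegment` and `citedShare` are hypothetical names.

```typescript
// Hypothetical audit-side shape: a piece of response text and whether it
// came back with at least one citation.
interface AuditSegment {
  text: string;
  cited: boolean;
}

// Share of response text, by character count, that carries a citation.
// Returns 0 for an empty conversation.
function citedShare(segments: AuditSegment[]): number {
  let citedChars = 0;
  let totalChars = 0;
  for (const segment of segments) {
    const length = segment.text.trim().length;
    totalChars += length;
    if (segment.cited) citedChars += length;
  }
  return totalChars === 0 ? 0 : citedChars / totalChars;
}

// Made-up conversation for illustration only.
const conversationShare = citedShare([
  { text: 'Thanks for reaching out! ', cited: false },
  { text: "We're open Monday through Friday, 9 to 5.", cited: true },
  { text: 'We can probably fit you in tomorrow.', cited: false },
]);
```

Weighting by characters rather than counting segments keeps one long uncited paragraph from being hidden behind several short cited sentences.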

What's Next

Citations are the foundation for the trust layer's labeling loop. When the owner clicks a citation and corrects the source, that's a labeling signal: captured, stored, and used to improve how the AI grounds future claims. We're already capturing these signals through the trust layer interfaces; citations pin them to the exact sentence in question.

The longer arc: every customer-facing AI deployment should be able to answer the question "where did that come from?" for any claim it makes. Not as a UX nicety. As a precondition for putting AI in front of the public at all.

If you're building with Claude and you haven't turned citations on, this is the easiest infrastructure improvement you can make in an afternoon. Stop putting your knowledge in the system prompt as text. Pass it as document blocks with citations enabled. Render the citations the API gives you back. Your users will trust you more, and you'll trust your AI more, for the same reason: everyone can see where the words came from.
